Update: Schrep (I’m far back right, he’s in the middle…note the ripped shirt, sorry Schrep!) was kind enough to point out that, as of the resolution of bug 423377, Firefox 3 defaults to 6 simultaneous connections per server. Modern browsers each use a different limit; the lowest is IE 7 with 2, which is also what all older browsers use.

One of my projects here at Mozilla (and, coincidentally, a past project at Yahoo!) was improving ySlow scores. ySlow is a utility that measures load time and analyzes page performance, assigning you a final letter grade based on various performance metrics. It’s a neat little Firebug plugin, and I highly suggest that any web developer install it.

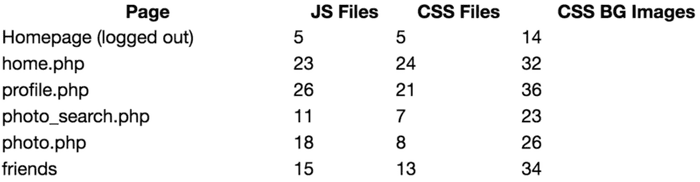

Occasionally I like to play with this tool on other big sites, just to see how many of them actually care about such things. So I went through and ran ySlow on some of the more common Facebook pages. Here’s what I found:

In most respects Facebook does a decent job: apart from advertiser scripts and some application-specific code, they use ETags, minify their JS, and set long Expires headers. What amazed me is the number of JS and CSS files on each page, all listed one after another in the page head:
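For anyone who hasn't set these up before, here's a minimal sketch of what ETags and long Expires headers boil down to on the server side. The function names and the ten-year lifetime are my own hypothetical choices, not how Facebook actually does it:

```python
import hashlib
from datetime import datetime, timedelta, timezone
from email.utils import format_datetime
from typing import Optional

# Hypothetical "far-future" lifetime of roughly ten years.
FAR_FUTURE = timedelta(days=3650)

def make_etag(body: bytes) -> str:
    # Derive the ETag from the file contents, so it only changes when the bytes change.
    return '"' + hashlib.md5(body).hexdigest() + '"'

def cache_headers(body: bytes, now: Optional[datetime] = None) -> dict:
    """Return ETag plus far-future caching headers for a static asset."""
    now = now or datetime.now(timezone.utc)
    return {
        "ETag": make_etag(body),
        # Expires must be an HTTP-date in GMT; usegmt=True requires a UTC datetime.
        "Expires": format_datetime(now + FAR_FUTURE, usegmt=True),
        "Cache-Control": f"max-age={int(FAR_FUTURE.total_seconds())}",
    }

def is_not_modified(request_headers: dict, body: bytes) -> bool:
    """True if the client's cached copy is current, so a 304 can replace the body.
    Simplified: compares against a single ETag, not a list."""
    return request_headers.get("If-None-Match") == make_etag(body)
```

The catch with far-future headers is that a changed file must get a new URL (a version number or hash in the filename), otherwise clients keep the stale copy until it expires.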

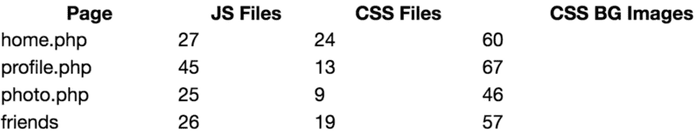

And for those curious, here’s the count for the new Facebook design (summary: significantly worse):

Really, I can’t think of any context in which 47 external files would be necessary! I understand breaking files up by purpose to make coding and revision management easier, but I wonder whether anyone there ever considered the speed impact. I’m fortunate enough to almost always have a broadband connection, but the experience for their dial-up users is probably deplorable. Given that they now localize the site and are pushing to expand overseas, you would think this would be a much higher priority. And don’t even get me started on the lack of spriting!

Here’s how I set up Mozilla’s JS/CSS concatenation (see the Build Process):

1. Add a configuration setting for site state (example: a flag set to “production” on production servers, “dev” on everything else)

2. Have every CSS/JS include check this flag to decide whether it points at the concatenated files or the individual development files

3. Create a build script that generates the concatenated files (profile.js, photo.js, etc.), and run it before pushing to production
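The build script in the last step can be very small. Here's a rough sketch of the idea; the bundle manifest, file paths, and `build_bundles` name are hypothetical and not Mozilla's actual build code:

```python
from pathlib import Path

# Hypothetical manifest: output bundle -> ordered list of source files.
# Order matters, since later scripts may depend on earlier ones.
BUNDLES = {
    "profile.js": ["js/base.js", "js/profile.js"],
    "photo.js": ["js/base.js", "js/photo.js"],
}

def build_bundles(src_root: Path, out_root: Path, bundles: dict) -> list:
    """Concatenate each bundle's sources, in order, into one file under out_root."""
    out_root.mkdir(parents=True, exist_ok=True)
    written = []
    for name, sources in bundles.items():
        # For JS, a ';' between files guards against a source missing its final
        # semicolon; CSS gets a plain newline, since a stray ';' can break CSS rules.
        sep = ";\n" if name.endswith(".js") else "\n"
        parts = []
        for rel in sources:
            text = (src_root / rel).read_text(encoding="utf-8")
            # The comment marks where each original file starts (valid in JS and CSS).
            parts.append(f"/* {rel} */\n{text}{sep}")
        out = out_root / name
        out.write_text("".join(parts), encoding="utf-8")
        written.append(out)
    return written
```

Running the output through a minifier afterwards is a natural extra step, since each bundle is then a single file.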

Ironically enough, I would bet Facebook already has #1 and #2 set up, since they serve production assets from Akamai and can’t use it for development.
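The flag check in #1 and #2 might look something like this sketch. The `SITE_STATE` environment variable, the CDN host, and the `script_tags` helper are all hypothetical names for illustration:

```python
import os

# Hypothetical setting (#1): "production" on production servers, "dev" everywhere else.
SITE_STATE = os.environ.get("SITE_STATE", "dev")

# Hypothetical CDN host and bundle manifest; names are illustrative only.
CDN_BASE = "https://static.example.com"
BUNDLES = {"profile.js": ["js/base.js", "js/profile.js"]}

def script_tags(bundle: str, state: str = SITE_STATE) -> str:
    """Emit <script> tags for a bundle (#2): one concatenated file from the CDN
    in production, the individual source files everywhere else."""
    if state == "production":
        srcs = [f"{CDN_BASE}/{bundle}"]
    else:
        srcs = [f"/{path}" for path in BUNDLES[bundle]]
    return "\n".join(f'<script src="{src}"></script>' for src in srcs)
```

Templates then call `script_tags("profile.js")` everywhere, so developers keep editing small files while production serves a single request per bundle.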

Alternatively, they could use the approach YUI uses for serving JS files: request a single script URL that returns the requested files concatenated together. It’s a less elegant solution, and it’s heavier on the server, but it’s still better than nothing.
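As a rough sketch of that approach (in the spirit of YUI's combo handler, not its actual implementation), a server-side script takes a list of file names in the query string and returns them concatenated. The `/combo` path, the `a.js&b.js` query format, and the `static` asset root are my assumptions:

```python
from http.server import BaseHTTPRequestHandler
from pathlib import Path
from urllib.parse import unquote, urlsplit

ASSET_ROOT = Path("static")  # hypothetical directory holding the JS files

def combine(query: str, root: Path) -> str:
    """Return the files named in a query like 'a.js&b.js', concatenated in order."""
    root = root.resolve()
    parts = []
    for name in query.split("&"):
        if not name:
            continue
        path = (root / unquote(name)).resolve()
        # Refuse anything that escapes the asset root (e.g. '../secret').
        if root not in path.parents:
            raise ValueError(f"path outside asset root: {name}")
        parts.append(path.read_text(encoding="utf-8"))
    return "\n".join(parts)

class ComboHandler(BaseHTTPRequestHandler):
    """Serves GET /combo?a.js&b.js as one JavaScript response (JS only in this sketch)."""

    def do_GET(self):
        url = urlsplit(self.path)
        if url.path != "/combo":
            self.send_error(404)
            return
        try:
            body = combine(url.query, ASSET_ROOT).encode("utf-8")
        except (ValueError, OSError):
            self.send_error(404)
            return
        self.send_response(200)
        self.send_header("Content-Type", "application/javascript")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)
```

It can be tried locally with `HTTPServer(("", 8000), ComboHandler).serve_forever()` from `http.server`. Because every request re-reads and re-joins files, this is where the extra server load comes from; putting a cache in front of it helps.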

Note that this doesn’t only affect dial-up users. Broadband connections usually have enough bandwidth to offset the size of the downloads, but not their number: for broadband users, a large file count is the biggest slowdown, because every request costs at least one round trip and most browsers obey a 2-simultaneous-connection limit. From RFC 2068:

> Clients that use persistent connections SHOULD limit the number of simultaneous connections that they maintain to a given server. A single-user client SHOULD maintain AT MOST 2 connections with any server or proxy.
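Some back-of-the-envelope arithmetic shows why the connection limit hurts. This is an idealized model of my own: every file takes about one round trip, and each connection fetches one file at a time. The 100 ms round-trip time is an assumed figure, not a measurement:

```python
import math

def request_rounds(file_count: int, max_connections: int) -> int:
    """Minimum number of sequential request 'rounds' when each connection
    fetches one file at a time and every file takes about the same time."""
    return math.ceil(file_count / max_connections)

FILES = 47            # the external file count mentioned above
ASSUMED_RTT_MS = 100  # hypothetical round-trip time

for conns in (2, 6):  # the old 2-connection limit vs. Firefox 3's 6
    rounds = request_rounds(FILES, conns)
    print(f"{conns} connections: {rounds} rounds, >= {rounds * ASSUMED_RTT_MS} ms of latency alone")
```

Under these assumptions, 47 files need 24 rounds with 2 connections (at least 2.4 seconds of pure latency) and 8 rounds with 6. Concatenating down to one JS and one CSS file needs a single round either way, regardless of bandwidth.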

Facebook.com is the 8th most popular site on the web, while Mozilla.org is the 258th (and that ranking covers all of mozilla.org, not just addons.mozilla.org). Facebook should be able to devote far more resources to the tail end of its users, especially considering the residual benefits for its main audience.

Just as bad is their lack of a proper fallback for users with JS disabled. For example, with JS disabled you can still log in fine, but once you’re logged in, head back to facebook.com. That’s right, they use JS to redirect users from their homepage, with no